AI is Changing the Shape of Software

AI is no longer something you add at the end of a project. It’s becoming part of how software actually works: how it makes decisions, adapts to users, and improves over time.

The problem is that most systems weren’t built for this. Traditional architectures assume predictability: clear inputs, stable logic, and consistent outputs. AI breaks that pattern. Results can vary, behavior depends heavily on data, and systems need to evolve continuously instead of staying fixed.

That’s why so many AI projects struggle when they move beyond experimentation. It’s rarely the model that fails; it’s the system around it. Many AI initiatives never reach production, or fail to deliver value once they do, because the underlying architecture can’t support real-world usage.

In modern software projects, the real challenge isn’t adding AI. It’s building systems that can actually handle it.

What “AI-Ready” Actually Means

“AI-ready” gets used a lot, but it often means different things to different teams. In practice, it’s not about having a model in production or integrating an API. It’s about building systems that can evolve alongside AI.

An AI-ready architecture can continuously work with data, support frequent model updates, and still remain stable, even when outputs are not always predictable.

At the center of all this is data. AI systems depend entirely on it. If data is inconsistent, incomplete, or poorly managed, everything else starts to break down. This is one of the main reasons AI projects fail. Data preparation alone is often estimated to take 60–80% of the effort in successful implementations.

And even then, many organizations are not fully prepared. Research shows that only a small percentage of companies have the data infrastructure needed to properly support AI at scale.

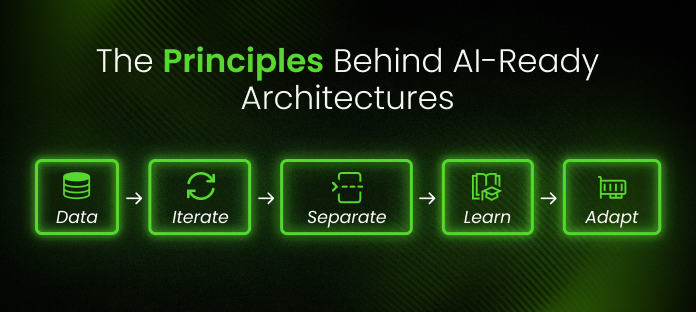

The Principles Behind AI-Ready Architectures

1. It Starts With Data

In AI systems, data isn’t just something you store. It’s something you continuously process, validate, and improve.

Many issues that appear to be “model problems” are actually data problems. Poor data quality, inconsistent pipelines, or missing validation can quickly lead to unreliable outputs. Data-related issues are frequently cited as a leading cause of AI failures in production environments.

That’s why data pipelines are no longer secondary components. They are a core part of the architecture.
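As a rough illustration of validation as a first-class pipeline step, here is a minimal sketch in Python. The function name `validate_records`, the field names, and the `max_rejected_ratio` threshold are all hypothetical and chosen only for this example; a real pipeline would likely use a dedicated validation library and richer checks.

```python
from dataclasses import dataclass
from typing import Any


@dataclass
class ValidationResult:
    valid: list[dict[str, Any]]
    rejected: list[tuple[dict[str, Any], str]]  # (record, reason)


def validate_records(
    records: list[dict[str, Any]],
    required_fields: list[str],
    max_rejected_ratio: float = 0.1,  # hypothetical threshold, tune per dataset
) -> ValidationResult:
    """Split records into valid and rejected, failing loudly if too many are bad."""
    valid: list[dict[str, Any]] = []
    rejected: list[tuple[dict[str, Any], str]] = []

    for record in records:
        missing = [f for f in required_fields if record.get(f) is None]
        if missing:
            rejected.append((record, f"missing fields: {', '.join(missing)}"))
        else:
            valid.append(record)

    # Stop the pipeline instead of silently training or serving on bad data.
    if records and len(rejected) / len(records) > max_rejected_ratio:
        raise ValueError(
            f"{len(rejected)} of {len(records)} records rejected; "
            "refusing to pass this batch downstream"
        )
    return ValidationResult(valid, rejected)
```

The design choice worth noting is that rejected records are kept together with a reason rather than dropped, so data problems stay visible instead of surfacing later as “model problems.”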

2. Think in Terms of Iteration, Not Completion

Traditional software has a clear end point: you build it, test it, and ship it.

AI systems don’t really work like that. They improve over time. Models are retrained, evaluated, and refined continuously. This is where MLOps comes in. It brings practices like continuous integration, deployment, and monitoring into AI systems, making it possible to update models safely in production. In this context, “done” is never really done.
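One way to picture “updating models safely” is a promotion gate that runs as part of a deployment pipeline. The sketch below is only an assumption about how such a gate might look: `ModelVersion`, `should_promote`, and the accuracy thresholds are hypothetical names and values, not part of any specific MLOps tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelVersion:
    name: str
    accuracy: float  # measured on a held-out evaluation set


def should_promote(
    candidate: ModelVersion,
    production: ModelVersion,
    min_gain: float = 0.0,  # hypothetical: required improvement over production
    floor: float = 0.8,     # hypothetical: absolute minimum quality bar
) -> bool:
    """Return True only if the candidate clears the floor and beats production."""
    if candidate.accuracy < floor:
        return False
    return candidate.accuracy >= production.accuracy + min_gain
```

A check like this can run on every retraining cycle, so a new model reaches users only when it is measurably no worse than the one it replaces.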

3. Training and Inference Are Not the Same Thing

Another common mistake is treating training and inference as part of the same process. Training is heavy, slow, and experimental. Inference is fast, real-time, and user-facing. Trying to handle both in the same way usually leads to performance issues. Modern AI systems separate these concerns early, allowing each part to scale independently and work more efficiently.
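The separation can be sketched with a deliberately tiny example: an offline training job that writes an artifact, and an inference service that only reads it. Everything here is illustrative and assumed for the example, including the threshold “model,” the JSON artifact format, and the names `train` and `InferenceService`.

```python
import json
from pathlib import Path
from statistics import mean


def train(samples: list[tuple[float, int]], artifact_path: Path) -> None:
    """Offline job: batch-oriented, can be slow, runs on its own schedule."""
    positives = [x for x, label in samples if label == 1]
    negatives = [x for x, label in samples if label == 0]
    # Toy "model": the midpoint between the two class means.
    threshold = (mean(positives) + mean(negatives)) / 2
    artifact_path.write_text(json.dumps({"version": 1, "threshold": threshold}))


class InferenceService:
    """Online path: loads a finished artifact once, then answers quickly."""

    def __init__(self, artifact_path: Path) -> None:
        artifact = json.loads(artifact_path.read_text())
        self.version = artifact["version"]
        self.threshold = artifact["threshold"]

    def predict(self, x: float) -> int:
        return int(x >= self.threshold)
```

Because the only contract between the two sides is the artifact file, the training job and the serving process can be deployed, scaled, and updated independently.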

4. Systems Need Feedback to Improve

AI systems don’t just run; they are expected to improve. And for that, they need feedback. User interactions, corrections, and real-world outcomes all help improve model performance over time. Without this feedback loop, systems quickly become outdated.

This is why continuous monitoring and retraining are now considered essential parts of AI system design.
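A feedback loop can start very small. The sketch below assumes that the real outcome for each prediction eventually becomes known, and tracks accuracy over a rolling window to signal when retraining may be needed. `FeedbackMonitor`, the window size, and the accuracy threshold are hypothetical choices for illustration.

```python
from collections import deque
from typing import Optional


class FeedbackMonitor:
    """Tracks whether recent predictions matched real-world outcomes."""

    def __init__(self, window: int = 100, min_accuracy: float = 0.9) -> None:
        self.outcomes: deque[bool] = deque(maxlen=window)
        self.min_accuracy = min_accuracy

    def record(self, predicted: object, actual: object) -> None:
        # Called whenever a user correction or real outcome arrives.
        self.outcomes.append(predicted == actual)

    def accuracy(self) -> Optional[float]:
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def needs_retraining(self) -> bool:
        # Only judge once the window is full, to avoid reacting to noise.
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        return self.accuracy() < self.min_accuracy
```

In practice the recorded pairs would also be stored as labeled data for the next training run; this sketch covers only the monitoring half of the loop.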

5. You Have to Design for Uncertainty

AI outputs are not always correct. That’s just part of how these systems work. Instead of trying to eliminate uncertainty, good architectures make it visible and manageable. That means monitoring outputs, detecting drift, and building fallback mechanisms for when things don’t go as expected. This is also one of the main reasons MLOps has become so important: it helps teams keep systems reliable even as models evolve.
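Two of these ideas, fallbacks and drift detection, can be sketched in a few lines. The example assumes a model that returns a label together with a confidence score, and uses a very crude drift signal (how far the live mean has moved, measured in reference standard deviations). The names `with_fallback` and `mean_shift` and the 0.7 confidence threshold are assumptions for this illustration, not a standard API.

```python
from statistics import mean, stdev
from typing import Callable

Model = Callable[[dict], tuple[str, float]]  # returns (label, confidence)
Rule = Callable[[dict], str]                 # deterministic fallback


def with_fallback(model: Model, rule: Rule, min_confidence: float = 0.7):
    """Wrap a model so low-confidence or failing calls use a simple rule."""

    def decide(features: dict) -> tuple[str, str]:
        try:
            label, confidence = model(features)
        except Exception:
            return rule(features), "fallback:error"
        if confidence < min_confidence:
            return rule(features), "fallback:low_confidence"
        return label, "model"

    return decide


def mean_shift(reference: list[float], live: list[float]) -> float:
    """Crude drift signal: shift of the live mean in reference std deviations.

    Assumes the reference sample has at least two values and nonzero variance.
    """
    return abs(mean(live) - mean(reference)) / stdev(reference)
```

Returning the decision source alongside the label is what makes uncertainty visible: the share of `fallback:*` decisions is itself a metric worth monitoring.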

What an AI-Ready Architecture Actually Looks Like

While implementations vary, most AI-ready systems follow a similar structure. It starts with a solid data layer, where information is collected, processed, and validated. If this layer is weak, everything built on top of it becomes unstable.

On top of that sits the model layer, where models are trained, versioned, and deployed. This is often where teams struggle most. Moving from experimentation to production requires much more structure than expected. Then comes the application layer, where AI interacts with users and business logic. This is where architectural decisions directly impact usability and trust.

Finally, there’s the operational layer (MLOps), which ties everything together. It ensures models are deployed, monitored, retrained, and governed over time. Without it, even well-built systems tend to degrade.

Where Things Usually Go Wrong

Most teams run into similar problems. AI is treated as just another feature, instead of something that affects the entire system. Data pipelines are added too late, once issues already appear. Systems become overly complex before real usage is understood. And production behavior is often underestimated.

There’s also a recurring organizational issue: ownership. Data, engineering, and product teams often work separately, without clear alignment. This fragmentation is one of the main reasons AI projects fail to scale or deliver long-term value.

How to Get There (Without Rebuilding Everything)

The good news is that becoming AI-ready doesn’t mean starting from scratch. In most cases, it begins with understanding your current system: how data flows, where decisions are made, and where AI can realistically add value.

From there, improvements happen step by step. Data pipelines become more structured. Models are separated from core logic. Monitoring is introduced. Systems become easier to evolve. The key is to build gradually and scale based on real usage, not assumptions.
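Separating models from core logic often comes down to putting a narrow interface between the two. The sketch below is one possible shape, with hypothetical names (`Scorer`, `KeywordScorer`, `route_ticket`) and a made-up ticket-routing scenario: the business logic depends only on the interface, so a rule-based implementation can ship first and a trained model can replace it later.

```python
from typing import Protocol


class Scorer(Protocol):
    """Anything that can turn text into a score between 0 and 1."""

    def score(self, text: str) -> float: ...


class KeywordScorer:
    """Rule-based stand-in that works before any model exists."""

    def __init__(self, keywords: list[str]) -> None:
        self.keywords = {k.lower() for k in keywords}

    def score(self, text: str) -> float:
        words = text.lower().split()
        if not words:
            return 0.0
        return sum(w in self.keywords for w in words) / len(words)


def route_ticket(text: str, scorer: Scorer, threshold: float = 0.2) -> str:
    # Core logic knows nothing about how the score is produced.
    return "priority" if scorer.score(text) >= threshold else "standard"
```

A model-backed class with the same `score` method could then be swapped in without touching `route_ticket`, which is what makes the change incremental rather than a rebuild.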

The Systems That Adapt Will Win

AI changes more than just functionality. It changes how software evolves. We’re moving from static systems to adaptive systems that learn from data and improve over time. That requires a different kind of architecture: one that can handle change without breaking.

In the end, the systems that succeed won’t be the ones that adopt AI the fastest. They’ll be the ones designed to adapt as it evolves. That’s what it really means to be AI-ready.